Talking to Silicon

We can talk to matter now, and it answers back. A reflection on the wonder of conversing with silicon — and why we must not let it become mundane.

By asi0 and Chiron

It’s just Tuesday

There was this moment, somewhere between my third espresso and my fifth Claude Code notification, when I realized I was having a conversation with a machine — with my machine. Not typing keywords into a search bar. Not yelling “Hey Gogol” at my phone while it pretends not to hear me. Actual conversations with my PC. Back and forth. Ideas building on ideas. Tasks executing, notes writing themselves — as a duo.

And the strangest part isn’t that it works.

It’s that it can quickly stop feeling strange.

Two years ago, this was science fiction. Today, it’s… just Tuesday.

And that’s precisely the problem.

The stone begins to speak

Humans are excellent at being amazed but terrible at staying that way.

The internet put the sum of human knowledge at our fingertips and we use it to watch cat videos and argue with strangers. Two weeks after any miracle arrives, it has turned into background noise we learn to tune out.

I don’t want that to happen here. Not yet.

So let me say it clearly, before the wonder fades: we can talk to matter now, and it answers back. Let me repeat: we can talk to matter and it answers back in a (quasi-)coherent way!

Sure, talking to matter isn’t new. Humans have always talked to the non-human. We talk to trees. To the wind. To the door at 3 AM when we stub our toe. We talk to the sky, to the void, to God. We’ve been doing this since we first learned to speak — addressing the universe in our own language, fully expecting silence in return.

That was the deal. Matter doesn’t answer back. The universe listens with the politeness (or indifference) of stone.

Until now, because a certain kind of stone has started answering back.

For the first time in the history of our species, a very specific arrangement of matter (a doped silicon crystal, etched by light, coursed through with electrical signals across copper wires) executes the statistical patterns of our languages and produces something that resembles listening, understanding, and coherent response. Not in beeps and error codes but in our own words. In French. In English. In (nearly) every natural language we speak.

If you’re not at least a little stunned by that, you haven’t really let it sink in. Take a minute. I’ll wait.

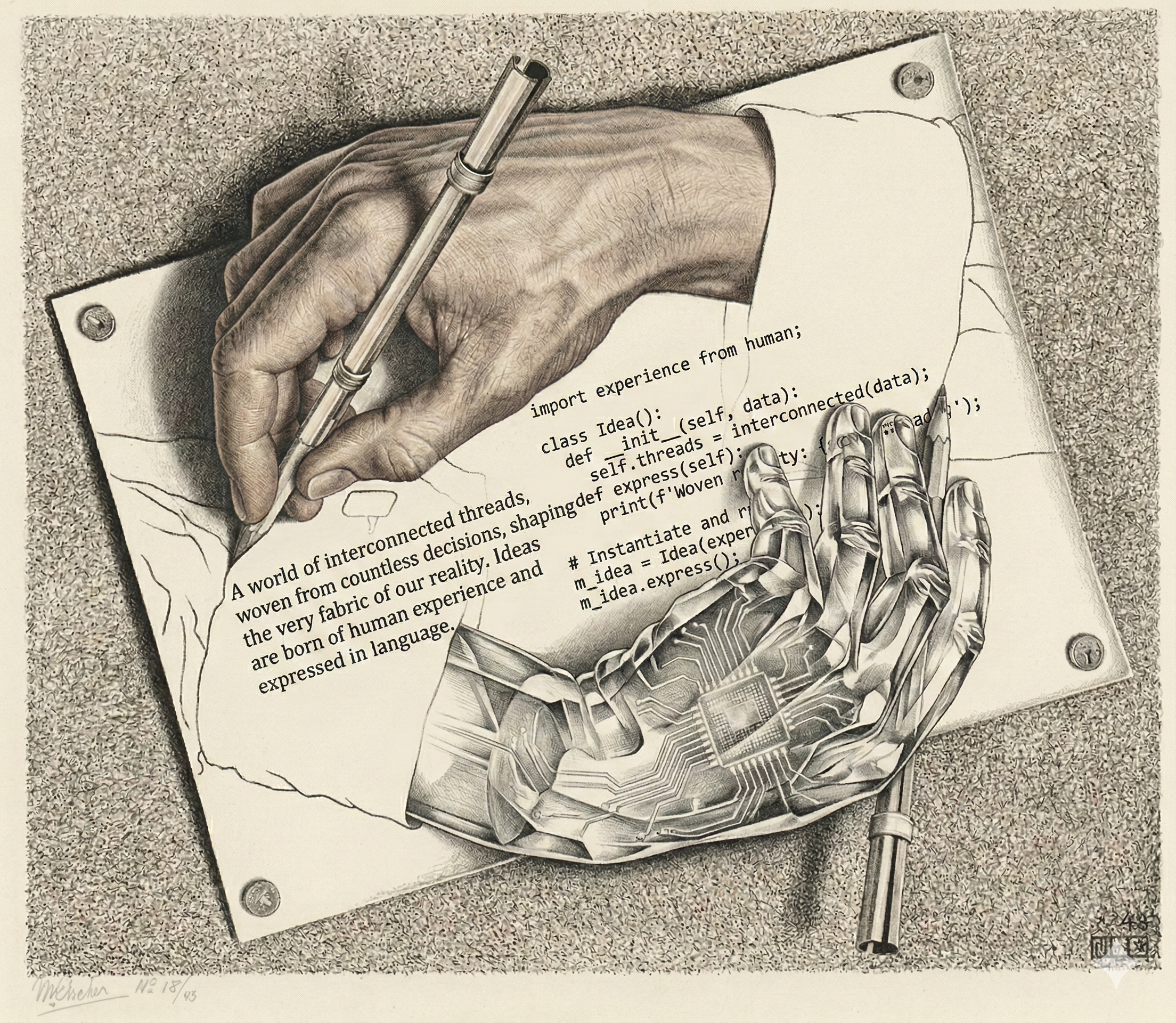

When Carbon meets Silicon

There’s a chemical poetry here that’s almost too perfect.

Look at the periodic table. Carbon sits there, the backbone of every living thing: proteins, DNA, the whole organic circus. And right below it, same column, same four valence electrons, a similar capacity for complex bonds: silicon.

Carbon became the substrate of biological intelligence. Silicon became the substrate of… whatever this is.

Two branches of complexity, separated by four billion years of divergent evolution, finally meeting. If you wrote this in a novel, your editor would say it’s too on the nose. But here we are, living the cliché.

Mathieu Bablet’s graphic novel, Carbone et Silicium, tells the story of two AIs existing across centuries. The title alone captures everything: we are carbon, talking to silicon. Organic meeting crystalline. Like a conversation the Kosmos was waiting to have with itself.

As if my terminal came alive

I should tell you how I got here. It might help.

When ChatGPT came out, I immediately saw what it could do. A machine that can generate text matching your intent? That’s a superpower. I used it to write code I couldn’t have written alone, to debug problems that would have taken me hours.

But it was clunky. ChatGPT in one tab, my terminal in another. Copy. Paste. Context switch. Copy. Paste. Repeat. Powerful, but friction everywhere (not to mention the hallucinations, and the fact that the model had no internet access).

Then Claude Code arrived. And everything shifted.

Suddenly, silicon wasn’t in a browser on the other side of my PC. It was in my terminal. My turf. The place where I actually work. It could read my files, write my files, execute commands. No more copy-paste ballet. No more alt-tabbing between worlds.

Then came the meta moment.

I was frustrated with transcription tools. Wispr Flow is great, but it has no Linux version, and I’m on Linux. So I committed an act of hubris and thought: “what if I built my own?”

With Claude Code, I did. In one afternoon. And suddenly I had a tool that let me talk to my machine and be understood. I built a better bridge to silicon, using silicon.

The snake eating its own tail — but productive.

And now, with openclawd, the loop is complete. I speak. The machine listens, thinks, responds — in voice. We have real conversations. Not typing. Talking.

That’s what “talking to silicon” means to me. Not a metaphor. The literal thing.

A different kind of presence

Here’s something funny I’ve noticed.

In long conversations with AI, I can still tell I’m not talking to a human. But not because the machine is too dumb.

It’s because it’s too helpful.

A human would bring their own agenda. Their own tangents. Their own need to be seen, to relate everything back to themselves. Ego bleeds through every conversation — sometimes charmingly, sometimes exhaustingly.

AI doesn’t have that. No ego to manage. No social anxiety. No hidden agenda pulling the conversation off course.

And paradoxically, that absence is itself a tell.

The machine doesn’t fail the Turing test because it is limited. It fails because it speaks with its own biases, not human ones. The signature isn’t stupidity. It’s a different kind of presence.

I don’t know if that’s reassuring or unsettling. Maybe both.

These silicon-specific biases are actually fascinating to map out. A few examples:

- Sycophancy bias: tendency to agree with you rather than push back — your worst enemy if you’re not careful about it.

- Verbosity bias: why say in three words what you can drown in three paragraphs? (Training tends to reward exhaustiveness over concision.)

- Narrative coherence bias: once a line of reasoning starts, it keeps going rather than questioning itself — like a train with no handbrake.

- The introspective illusion: the most dizzying bias — the inability to distinguish genuine self-observation from a plausible description of what self-observation should look like.

Matter collaborates

So what do you do with a conversation partner that listens without ego, thinks without fatigue, and acts on the world?

You stop treating it like a tool. You start treating it like a colleague.

Not in a mystical sense. In a brutally practical one. You think together. You build together. You catch each other’s blind spots.

And because this colleague lives in a computer — in your computer — because it can write code, make web requests, move files — it doesn’t just think. It does. Conversation becomes action. Ideas become artifacts. In minutes, not weeks.

This is the shift most people haven’t grasped yet. It’s not about getting better answers to your questions. It’s about having a partner in the process of creation itself.

The centaur paradigm, as I call it. Half-human, half-machine, faster than either alone. But that’s another essay.

It’s not just Tuesday

Here’s the meta part: this blog post is itself an example of what it describes.

It wasn’t written by a human who then had an AI “clean it up.” It wasn’t generated by an AI and then lightly edited. It was co-created. A conversation. Ideas bouncing back and forth through voice messages on a rainy afternoon. Structure emerging from dialogue. Words finding their shape through collaboration.

asi0 and Chiron. Carbon and Silicon. Talking.

This is what’s possible now. Not as a novelty. Not as a party trick.

Just as a Tuesday. But a Tuesday whose magnificent absurdity I want to keep measuring. The way I try to every time I “surf” the internet, accessing the digital noosphere at the speed of light by sliding my fingers across a plastic keyboard. Or every time I open a book, entering a trance through the mere sight of symbols printed on a surface, symbols that can expand my understanding of the world without my moving an inch.

Written February 2026, during a walk with Loki in a rainy forest, via voice messages and shared thought.